
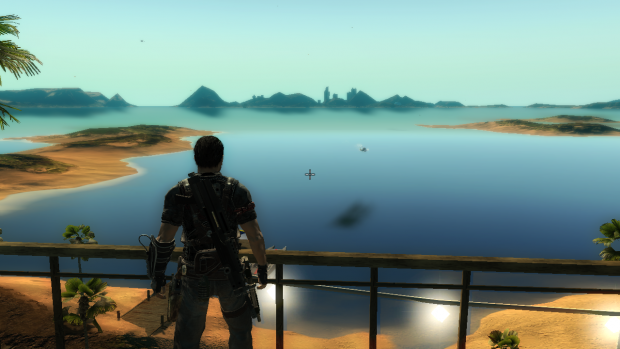

Just Cause 2 mods website down

4/16/2023

The launch of GPT-4 got me thinking about how humans create valuable things that are too complex to understand. GPT-4 is an example of a broader class of valuable-but-incomprehensible things that includes the world economy, the source code for large products like Google Search, and life itself. OpenAI created GPT-4 by having a vast number of “stupid” GPU cores iterate very fast, making a series of tiny improvements to an incredibly complex model of human knowledge, until eventually the model turned into something valuable. Matt Ridley has argued that a similar iterative evolutionary process is responsible for the creation of pretty much everything that matters.

But this kind of productive evolution doesn’t just happen automatically by itself. You can’t just pour out a pile of DNA, or people, or companies, or training data, and expect something good to magically evolve by itself. For productive evolution to happen, you need to have the right structures to direct it. Those structures ensure that iteration is fast, that each iterative step has low risk of causing harm, and that iteration progresses in a good direction. In the rest of this post I’ll look at what this means in practice, drawing on examples from machine learning, metric-driven development, online experimentation, programming tools, large-scale social behavior, and online speech.

We can see a similar pattern in the structures that companies like Meta/Facebook (where I worked as a Product Manager) use to direct the actions of their employees. Like GPT’s parameterized model, Facebook has a “representation” of its product in the form of its source code. Like GPT, that code is too huge and messy for any engineer to truly understand how it works. Facebook also has a “cost function”: a simple, easily measured engagement metric that is used to guide iteration.

Facebook the company created its product by starting with something very simple and then having tens of thousands of employees make rapid iterative changes to the code. Changes that improve the engagement metric are kept, and so the product improves, as judged by that metric.

A key part of Facebook culture is that it’s more important to iterate rapidly than to always do the right thing. Facebook thus places great importance on having very simple engagement-based metrics that can be used to judge whether a launch is good based on data from a short experiment, rather than requiring wise people to think deeply about whether a change is actually good. The problem with requiring wise people to think deeply about whether a change is good is that thinking deeply is slow.

When I was at Facebook, it was common for engineers to have suspicions that the changes they were shipping were actually making the product worse, but the cultural norm was to ship such changes anyway. The assumption is that it’s worth the cost of shipping some changes that make the product worse if it allows the company to iterate faster. That doesn’t mean that concerns about bad launches were completely ignored. However, the expected thing to do if you have concerns about bad launches isn’t to hold up your launch (except in extreme circumstances), but to pass the concern on to the data science team, who use those concerns to craft updated metrics that allow teams to continue to iterate fast, but with fewer launches making the product worse. Indeed, it was a process like this that caused the transition from “time spent” as the core metric to “meaningful social interactions”.

There are of course disadvantages to requiring that your metric be something simple and easily measured. “Not everything that counts can be counted”, and it’s hard to measure things like “is this morally right, or improving people’s lives” using this kind of mechanism. However, there are clear benefits in finding ways to judge potential changes as quickly as possible.

Being stupid really fast often beats being thoughtful really slowly.

Probably 10% of experiments totally break the product in a way that makes it unusable, 40% of them make the product significantly worse in some easily measurable way, 40% of them make the product worse in some minor or inconclusive way, and 10% of them make the product better. Those numbers sound terrible until you realize that the 10% of experiments that totally broke the product got taken down within minutes by automated systems, the 40% that made the product significantly worse got shut down within a day when analysts saw the numbers trending badly, the 40% that made the product slightly worse only affected 1% of users for a week, and the 10% that made the product better were launched and benefitted all users forever.
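To make the arithmetic behind the experiment breakdown above (10% broken, 40% significantly worse, 40% slightly worse, 10% better) a little more concrete, here is a rough Python sketch that tallies how much user exposure each bucket gets. The bucket shares and the rough durations come from the text; treating every harmful experiment as reaching 1% of users, reading “within minutes” as 10 minutes, and using a 365-day horizon as a stand-in for “forever” are assumptions made for illustration, not real Facebook figures. It counts exposure only, not how much each change actually hurt or helped.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    """One bucket of experiments from the rough breakdown in the text."""
    name: str
    share: float          # fraction of all experiments in this bucket
    user_fraction: float  # fraction of users who see the change (assumed)
    days_exposed: float   # how long those users see it (partly assumed)
    harmful: bool


# ASSUMPTIONS for illustration: 1% exposure for harmful buckets,
# "within minutes" = 10 minutes, and "forever" = a 365-day horizon.
ASSUMED_HORIZON_DAYS = 365
OUTCOMES = [
    Outcome("totally broke the product", 0.10, 0.01, 10 / (24 * 60), True),
    Outcome("significantly worse", 0.40, 0.01, 1.0, True),
    Outcome("slightly worse", 0.40, 0.01, 7.0, True),
    Outcome("better, launched to everyone", 0.10, 1.00, ASSUMED_HORIZON_DAYS, False),
]


def exposure(outcomes, n_experiments):
    """Return (harmful, beneficial) exposure in population-days.

    One population-day means every user seeing a change for one day.
    """
    harmful = beneficial = 0.0
    for o in outcomes:
        amount = n_experiments * o.share * o.user_fraction * o.days_exposed
        if o.harmful:
            harmful += amount
        else:
            beneficial += amount
    return harmful, beneficial


if __name__ == "__main__":
    bad, good = exposure(OUTCOMES, n_experiments=100)
    print(f"harmful exposure:    {bad:.3f} population-days")
    print(f"beneficial exposure: {good:.1f} population-days")
    print(f"ratio: {good / bad:.0f}x")
```

Under these assumptions, 100 experiments expose users to roughly 3.2 population-days of worse product, while the ten winners deliver 3,650 population-days of better product. A change that breaks the product does far more damage per user-day than one that makes it slightly worse, so a fuller model would also weight each bucket by how large its effect was.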
